Why Do People Believe Stupid Stuff, Even When They're Confronted With the Truth?

This story is cross-posted from You Are Not So Smart.

The Truth: When your deepest convictions are challenged by contradictory evidence, your beliefs get stronger.

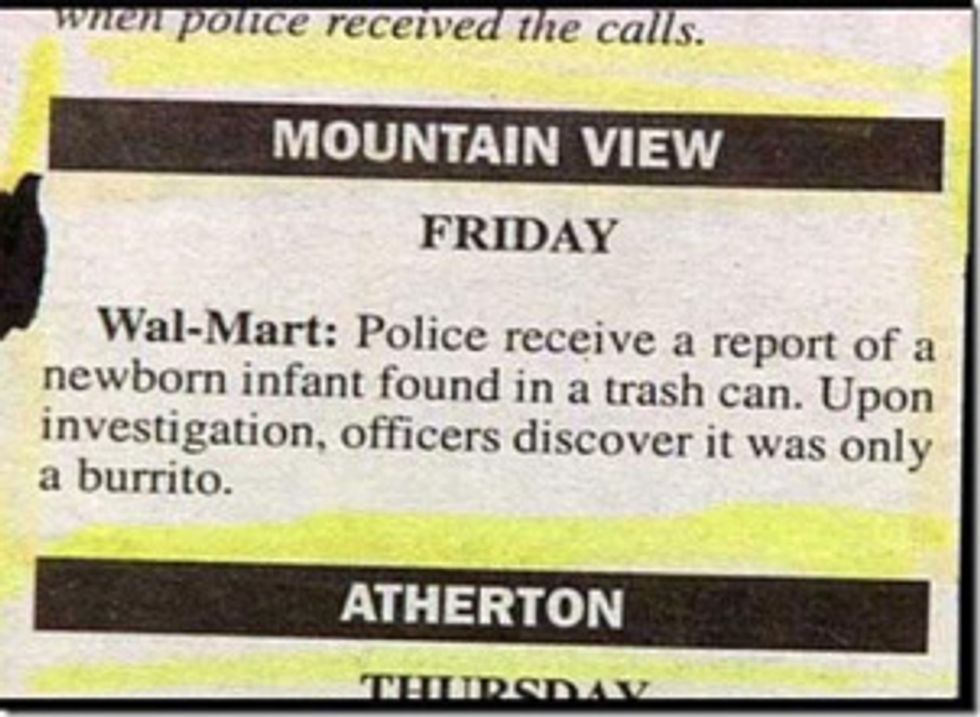

Wired, The New York Times, Backyard Poultry Magazine – they all do it. Sometimes, they screw up and get the facts wrong. In ink or in electrons, a reputable news source takes the time to say “my bad.”

If you are in the news business and want to maintain your reputation for accuracy, you publish corrections. For most topics this works just fine, but what most news organizations don’t realize is a correction can further push readers away from the facts if the issue at hand is close to the heart. In fact, those pithy blurbs hidden on a deep page in every newspaper point to one of the most powerful forces shaping the way you think, feel and decide – a behavior keeping you from accepting the truth.

In 2006, Brendan Nyhan and Jason Reifler at The University of Michigan and Georgia State University created fake newspaper articles about polarizing political issues. The articles were written in a way which would confirm a widespread misconception about certain ideas in American politics. As soon as a person read a fake article, researchers then handed over a true article which corrected the first. For instance, one article suggested the United States found weapons of mass destruction in Iraq. The next said the U.S. never found them, which was the truth. Those opposed to the war or who had strong liberal leanings tended to disagree with the original article and accept the second. Those who supported the war and leaned more toward the conservative camp tended to agree with the first article and strongly disagree with the second. These reactions shouldn’t surprise you. What should give you pause though is how conservatives felt about the correction. After reading that there were no WMDs, they reported being even more certain than before there actually were WMDs and their original beliefs were correct.

Once something is added to your collection of beliefs, you protect it from harm. You do it instinctively and unconsciously when confronted with attitude-inconsistent information. Just as confirmation bias shields you when you actively seek information, the backfire effect defends you when the information seeks you, when it blindsides you. Coming or going, you stick to your beliefs instead of questioning them. When someone tries to correct you, tries to dilute your misconceptions, it backfires and strengthens them instead. Over time, the backfire effect helps make you less skeptical of those things which allow you to continue seeing your beliefs and attitudes as true and proper.

In 1976, when Ronald Reagan was running for president of the United States, he often told a story about a Chicago woman who was scamming the welfare system to earn her income.

Reagan said the woman had 80 names, 30 addresses and 12 Social Security cards which she used to get food stamps along with more than her share of money from Medicaid and other welfare entitlements. He said she drove a Cadillac, didn’t work and didn’t pay taxes. He talked about this woman, whom he never named, in just about every small town he visited, and it tended to infuriate his audiences. The story solidified the term “Welfare Queen” in American political discourse and influenced not only the national conversation for the next 30 years, but public policy as well. It also wasn’t true.

Despite the debunking and the passage of time, the story is still alive. The imaginary lady who Scrooge McDives into a vault of food stamps between naps while hardworking Americans struggle down the street still appears every day on the Internet. The memetic staying power of the narrative is impressive. Some version of it continues to turn up every week in stories and blog posts about entitlements even though the truth is a click away.

Psychologists call stories like these narrative scripts, stories that tell you what you want to hear, stories which confirm your beliefs and give you permission to continue feeling as you already do. If believing in welfare queens protects your ideology, you accept it and move on. You might find Reagan’s anecdote repugnant or risible, but you’ve accepted without question a similar anecdote about pharmaceutical companies blocking research, or unwarranted police searches, or the health benefits of chocolate. You’ve watched a documentary about the evils of…something you disliked, and you probably loved it. For every Michael Moore documentary passed around as the truth there is an anti-Michael Moore counter documentary with its own proponents trying to convince you their version of the truth is the better choice.

A great example of selective skepticism is the website literallyunbelievable.org. The site collects Facebook comments from people who believe articles from the satire newspaper The Onion are real. Articles about Oprah offering a select few the chance to be buried with her in an ornate tomb, or the construction of a multibillion-dollar abortion supercenter, or NASCAR awarding money to drivers who make homophobic remarks are all commented on with the same sort of “yeah, that figures” outrage. As the psychologist Thomas Gilovich said, “When examining evidence relevant to a given belief, people are inclined to see what they expect to see, and conclude what they expect to conclude…for desired conclusions, we ask ourselves, ‘Can I believe this?,’ but for unpalatable conclusions we ask, ‘Must I believe this?’”

This is why hardcore doubters who believe Barack Obama was not born in the United States will never be satisfied with any amount of evidence put forth suggesting otherwise. When the Obama administration released his long-form birth certificate in April of 2011, the reaction from birthers was as the backfire effect predicts. They scrutinized the timing, the appearance, the format – they gathered together online and mocked it. They became even more certain of their beliefs than before. The same has been and will forever be true for any conspiracy theory or fringe belief. Contradictory evidence strengthens the position of the believer. It is seen as part of the conspiracy, and missing evidence is dismissed as part of the coverup.

This helps explain how strange, ancient and kooky beliefs resist science, reason and reportage. It goes deeper though, because you don’t see yourself as a kook. You don’t think thunder is a deity going for a 7-10 split. You don’t need special underwear to shield your libido from the gaze of the moon. Your beliefs are rational, logical and fact-based, right?

Well…consider a topic like spanking. Is it right or wrong? Is it harmless or harmful? Is it lazy parenting or tough love? Science has an answer, but let’s get to that later. For now, savor your emotional reaction to the issue and realize you are willing to be swayed, willing to be edified on a great many things, but you keep a special set of topics separate.

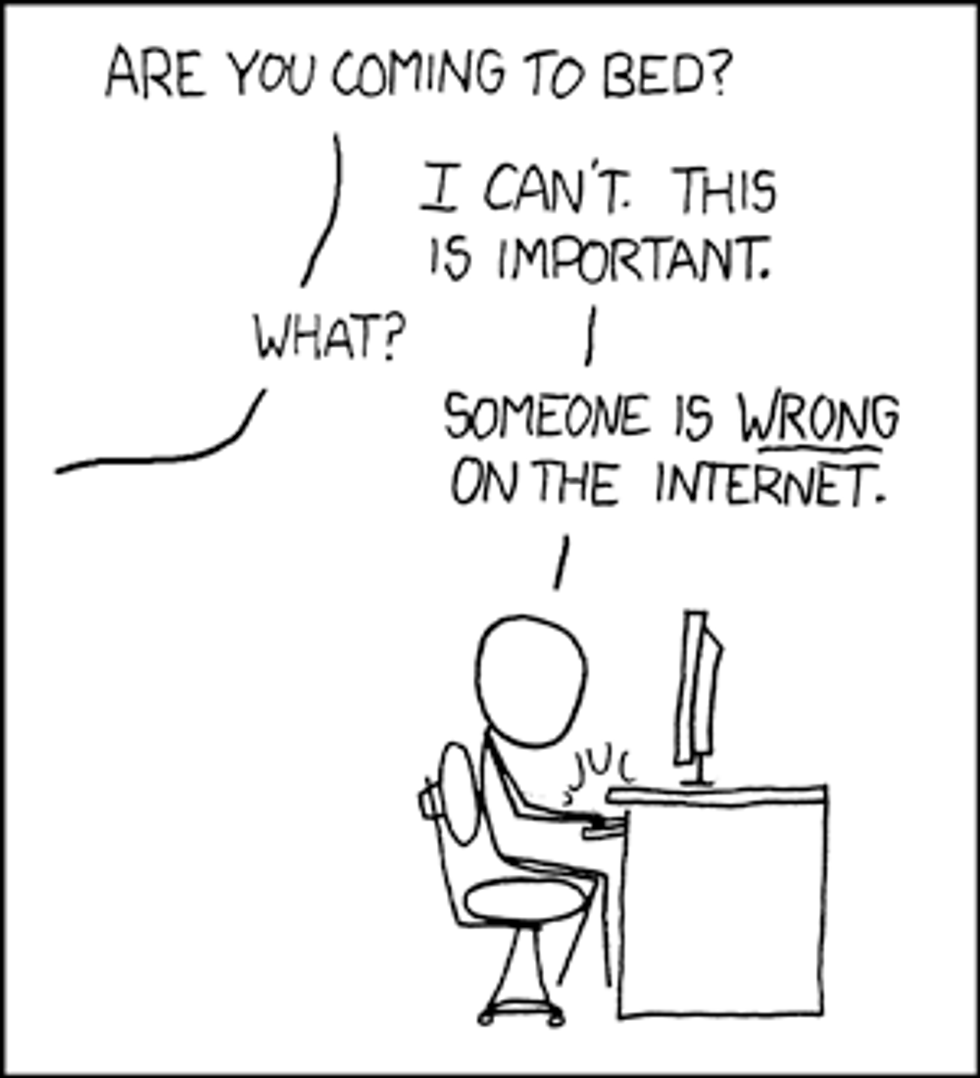

Source: www.xkcd.com

The last time you got into, or sat on the sidelines of, an argument online with someone who thought they knew all there was to know about health care reform, gun control, gay marriage, climate change, sex education, the drug war, Joss Whedon or whether or not 0.9999 repeated to infinity was equal to one – how did it go?

Did you teach the other party a valuable lesson? Did they thank you for edifying them on the intricacies of the issue after cursing their heretofore ignorance, doffing their virtual hat as they parted from the keyboard a better person?

No, probably not. Most online battles follow a similar pattern, each side launching attacks and pulling evidence from deep inside the web to back up their positions until, out of frustration, one party resorts to an all-out ad hominem nuclear strike. If you are lucky, the comment thread will get derailed in time for you to keep your dignity, or a neighboring commenter will help initiate a text-based dogpile on your opponent.

What should be evident from the studies on the backfire effect is you can never win an argument online. When you start to pull out facts and figures, hyperlinks and quotes, you are actually making the opponent feel as though they are even more sure of their position than before you started the debate. As they match your fervor, the same thing happens in your skull. The backfire effect pushes both of you deeper into your original beliefs.

Have you ever noticed the peculiar tendency you have to let praise pass through you, but feel crushed by criticism? A thousand positive remarks can slip by unnoticed, but one “you suck” can linger in your head for days. One hypothesis as to why this and the backfire effect happen is that you spend much more time considering information you disagree with than you do information you accept. Information which lines up with what you already believe passes through the mind like a vapor, but when you come across something which threatens your beliefs, something which conflicts with your preconceived notions of how the world works, you seize up and take notice. Some psychologists speculate there is an evolutionary explanation. Your ancestors paid more attention to, and spent more time thinking about, negative stimuli than positive because bad things required a response. Those who failed to address negative stimuli failed to keep breathing.

In 1992, Peter Ditto and David Lopez conducted a study in which subjects dipped little strips of paper into cups filled with saliva. The paper wasn’t special, but the psychologists told half the subjects the strip would turn green if they had a terrible pancreatic disorder and told the other half it would turn green if they were free and clear. For both groups, they said the reaction would take about 20 seconds. The people who were told the strip would turn green if they were safe tended to wait much longer to see the results, far past the time they were told it would take. When it didn’t change color, 52 percent retested themselves. The other group, the ones for whom a green strip would be very bad news, tended to wait the 20 seconds and move on. Only 18 percent retested.

When you read a negative comment, when someone shits on what you love, when your beliefs are challenged, you pore over the data, picking it apart, searching for weakness. The cognitive dissonance locks up the gears of your mind until you deal with it. In the process you form more neural connections, build new memories and put out effort – once you finally move on, your original convictions are stronger than ever.

When our bathroom scale delivers bad news, we hop off and then on again, just to make sure we didn’t misread the display or put too much pressure on one foot. When our scale delivers good news, we smile and head for the shower. By uncritically accepting evidence when it pleases us, and insisting on more when it doesn’t, we subtly tip the scales in our favor.

- Psychologist Dan Gilbert in The New York Times

The backfire effect is constantly shaping your beliefs and memory, keeping you consistently leaning one way or the other through a process psychologists call biased assimilation. Decades of research into a variety of cognitive biases shows you tend to see the world through thick, horn-rimmed glasses forged of belief and smudged with attitudes and ideologies. When scientists had people watch Bob Dole debate Bill Clinton in 1996, they found that people who supported a candidate before the debate tended to believe their preferred candidate won. In 2000, when psychologists studied Clinton lovers and haters throughout the Lewinsky scandal, they found Clinton lovers tended to see Lewinsky as an untrustworthy homewrecker and found it difficult to believe Clinton lied under oath. The haters, of course, felt quite the opposite. Flash forward to 2011, and you have Fox News and MSNBC battling for cable journalism territory, both promising a viewpoint which will never challenge the beliefs of a certain portion of the audience. Biased assimilation guaranteed.

Biased assimilation doesn’t only happen in the presence of current events. Michael Hulsizer of Webster University, Geoffrey Munro at Towson, Angela Fagerlin at the University of Michigan, and Stuart Taylor at Kent State conducted a study in 2004 in which they asked liberals and conservatives to opine on the 1970 shootings at Kent State, where National Guard soldiers fired on Vietnam War demonstrators, killing four and injuring nine.

As with any historical event, the details of what happened at Kent State began to blur within hours. In the years since, books and articles and documentaries and songs have plotted a dense map of causes and motivations, conclusions and suppositions with points of interest in every quadrant. In the weeks immediately after the shooting, psychologists surveyed the students at Kent State who witnessed the event and found that 6 percent of the liberals and 45 percent of the conservatives thought the National Guard was provoked. Twenty-five years later, they asked current students what they thought. In 1995, 62 percent of liberals said the soldiers committed murder, but only 37 percent of conservatives agreed. Five years later, they asked the students again and found conservatives were still more likely to believe the protesters overran the National Guard while liberals were more likely to see the soldiers as the aggressors. What is astonishing is that they found the beliefs were stronger the more the participants said they knew about the event. The bias for the National Guard or the protesters was stronger the more knowledgeable the subject. The people who only had a basic understanding experienced a weak backfire effect when considering the evidence. The backfire effect pushed those who had put more thought into the matter farther from the gray areas.

Geoffrey Munro at the University of California and Peter Ditto at Kent State University concocted a series of fake scientific studies in 1997. One set of studies said homosexuality was probably a mental illness. The other set suggested homosexuality was normal and natural. They then separated subjects into two groups; one group said they believed homosexuality was a mental illness and one did not. Each group then read the fake studies full of pretend facts and figures suggesting their worldview was wrong. On either side of the issue, after reading studies which did not support their beliefs, most people didn’t report an epiphany, a realization they’ve been wrong all these years. Instead, they said the issue was something science couldn’t understand. When asked about other topics later on, like spanking or astrology, these same people said they no longer trusted research to determine the truth. Rather than shed their belief and face facts, they rejected science altogether.

The human understanding when it has once adopted an opinion draws all things else to support and agree with it. And though there be a greater number and weight of instances to be found on the other side, yet these it either neglects and despises, or else by some distinction sets aside and rejects, in order that by this great and pernicious predetermination the authority of its former conclusion may remain inviolate.

- Francis Bacon

Science and fiction once imagined the future in which you now live. Books and films and graphic novels of yore featured cyberpunks surfing data streams and personal communicators joining a chorus of beeps and tones all around you. Short stories and late-night pocket-protected gabfests portended a time when the combined knowledge and artistic output of your entire species would be instantly available at your command, and billions of human lives would be connected and visible to all who wished to be seen.

So, here you are, in the future surrounded by computers which can deliver to you just about every fact humans know, the instructions for any task, the steps to any skill, the explanation for every single thing your species has figured out so far. This once imaginary place is now your daily life.

So, if the future we were promised is now here, why isn’t it the ultimate triumph of science and reason? Why don’t you live in a social and political technotopia, an empirical nirvana, an Asgard of analytical thought minus the jumpsuits and neon headbands where the truth is known to all?

Among the many biases and delusions in between you and your microprocessor-rich, skinny-jeaned Arcadia is a great big psychological beast called the backfire effect. It’s always been there, meddling with the way you and your ancestors understood the world, but the Internet unchained its potential, elevated its expression, and you’ve been none the wiser for years.

As social media and advertising progress, confirmation bias and the backfire effect will become more and more difficult to overcome. You will have more opportunities to pick and choose the kind of information which gets into your head along with the kinds of outlets you trust to give you that information. In addition, advertisers will continue to adapt, not only generating ads based on what they know about you, but creating advertising strategies on the fly based on what has and has not worked on you so far. The media of the future may be delivered based not only on your preferences, but on how you vote, where you grew up, your mood, the time of day or year – every element of you which can be quantified. In a world where everything comes to you on demand, your beliefs may never be challenged.

Three thousand spoilers per second rippled away from Twitter in the hours before Barack Obama walked up to his presidential lectern and told the world Osama bin Laden was dead.

Novelty Facebook pages, get-rich-quick websites and millions of emails, texts and instant messages related to the event preceded the official announcement on May 1, 2011. Stories went up, comments poured in, search engines burned white hot. Between 7:30 and 8:30 p.m. on the first day, Google searches for bin Laden saw a 1 million percent increase from the number the day before. YouTube videos of Toby Keith and Lee Greenwood started trending. Unprepared news sites sputtered and strained to deliver up page after page of updates to a ravenous public.

It was a dazzling display of how much the world of information exchange had changed in the years since September of 2001, except in one predictable and probably immutable way. Within minutes of learning about SEAL Team Six, the headshot tweeted around the world and the swift burial at sea, conspiracy theories began to bounce against the walls of our infinitely voluminous echo chamber. Days later, when the world learned they would be denied photographic proof, the conspiracy theories grew legs, left the ocean and evolved into self-sustaining undebunkable life forms.

As information technology progresses, the behaviors you are most likely to engage in when it comes to belief, dogma, politics and ideology seem to remain fixed. In a world blossoming with new knowledge, burgeoning with scientific insights into every element of the human experience, like most people, you still pick and choose what to accept even when it comes out of a lab and is based on 100 years of research.

So, how about spanking? After reading all of this, do you think you are ready to know what science has to say about the issue? Here’s the skinny: psychologists are still studying the matter, but the current thinking says spanking generates compliance in children under seven if done infrequently, in private and using only the hands. Now, here’s a slight correction: other methods of behavior modification like positive reinforcement, token economies, time-outs and so on are also quite effective and don’t require any violence.

Reading those words, you probably had a strong emotional response. Now that you know the truth, have your opinions changed?